By: Zagazola Makama

A disturbing pattern of misinformation targeting Nigeria’s security forces is gaining traction on social media, with some users deploying artificial intelligence tools to fabricate narratives that portray bandits as military personnel.

Security analysts say the latest case draws attention to how manipulated images and misleading interpretations by AI tools are being weaponised to undermine public trust in troops who are risking their lives daily in the fight against terrorism and banditry across the North-East and North-West.

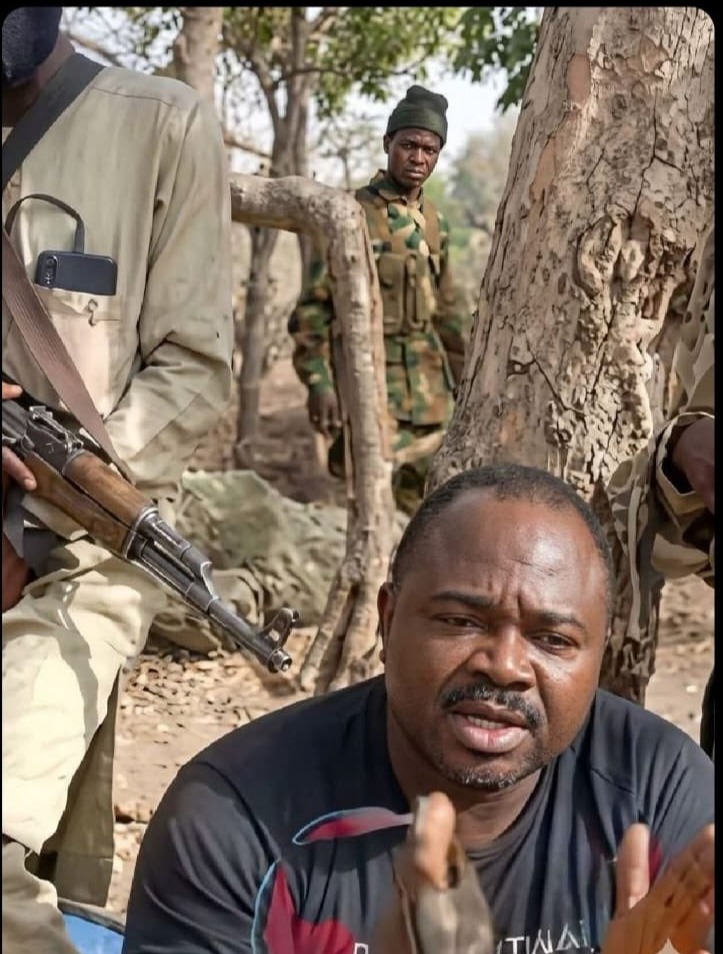

The controversy began after a video surfaced online showing a victim allegedly captured by armed bandits. In the background of the footage, one of the criminals appeared to be wearing a military-style camouflage uniform, a tactic widely known among security reporters and researchers covering banditry in the North-West.

Bandits and terrorists frequently wear imitation military camouflage to evade detection and mislead communities.

However, instead of analysing the video responsibly, a social media user identified as @Itsbennylee took a screenshot from the clip and reportedly used an artificial intelligence image tool to alter the frame, generating a face designed to resemble that of a Nigerian soldier.

The manipulated image was then posted online with the caption: “The one walking via the back is clearly seen… security operatives over to you!!!!”

The implication of the post was clear: to suggest that the individual seen in the video was a serving soldier collaborating with bandits.

But in a further twist, another social media user asked the AI chatbot Grok to verify whether the screenshot was genuine and whether it came from the original video. The AI system's response raised fresh concern.

According to the reply circulated online, the AI tool stated that the screenshot “appears real” and described the footage as a “security operation interview in Nigeria.” Even more troubling, the AI reportedly interpreted the armed bandits in the video as “troops with suspects in the field,” claiming there was “no edit or fake detected in the image context.”

This response clearly reflects a major weakness in automated analysis tools. These AI systems do not actually understand the operational realities of insurgency or banditry. They simply analyse pixels and patterns. If criminals wear camouflage or appear organised, the AI may incorrectly classify them as soldiers.

A senior military official said: “What we are seeing here is information warfare. Criminal groups and their sympathisers know that if they can portray soldiers as collaborators, they can destroy public trust in the military.”

By presenting an altered screenshot and then seeking validation from AI chatbots, misinformation actors are attempting to manufacture credibility for claims that have no factual basis. He warned that such behaviour amounts to digital sabotage of national security.

Another military official urged media professionals to back stronger action against individuals who deliberately spread manipulated content capable of undermining national security. “This is not just misinformation. It is malicious disinformation,” the official said.

“Anyone deliberately fabricating images to portray bandits as soldiers is engaging in a dangerous act that could inflame public anger against the military,” he said.

They urged law enforcement and relevant cybercrime agencies to investigate such cases and hold perpetrators accountable.

They also advised the public to approach viral social media claims with caution, particularly when they rely on screenshots, AI-generated images, or unverifiable sources.

As Nigeria continues its fight against terrorism and banditry, analysts warn that the battle is no longer confined to the battlefield; it is also being fought in the digital space.